Installing a Kubernetes cluster with kubeadm and cri-dockerd

1. System tuning

- Upgrade the kernel

# Install the ELRepo yum repository

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm

# After installing, check that the new kernel's menuentry in /boot/grub2/grub.cfg contains an initrd16 line; if it does not, install it again!

yum --enablerepo=elrepo-kernel install -y kernel-lt

# List the installed kernels (menu entry index : title)

sudo awk -F\' '$1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

# Boot the new kernel (entry 0 in the list above) by default, then reboot for it to take effect

grub2-set-default 0

reboot
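After the reboot it is worth confirming that the machine really came up on the ELRepo kernel rather than the stock 3.10 one. A minimal sketch, assuming GNU `sort -V` (available in CentOS 7's coreutils); the helper names are hypothetical, and the 5.4 threshold is an assumption based on the kernel-lt series ELRepo shipped for EL7, so adjust it to the version you actually installed:

```shell
# version_ge A B: succeed if version A >= version B (GNU sort -V ordering).
version_ge() {
  # If B sorts before (or equal to) A, the two-line list is already in order.
  printf '%s\n' "$2" "$1" | sort -V -C
}

# Running kernel without its release suffix, e.g. "5.4.197"
running_kernel() {
  uname -r | cut -d- -f1
}

# Hypothetical threshold: kernel-lt 5.4 is assumed here.
if version_ge "$(running_kernel)" 5.4; then
  echo "running kernel $(uname -r) is new enough"
else
  echo "still on $(uname -r); check grub2-set-default and reboot" >&2
fi
```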

- Configure /etc/hosts (on every node)

cat <<EOF >> /etc/hosts

192.168.152.128 master-s

192.168.152.129 node

EOF
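Because `>>` appends on every run, repeating this step leaves duplicate lines in /etc/hosts. A minimal idempotent variant, sketched with a hypothetical helper `add_host_entry`, only appends a mapping that is not already present; the file path is a parameter so it can be tried on a scratch copy first:

```shell
# add_host_entry IP NAME [FILE]: append "IP NAME" to FILE unless that exact mapping exists.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # Exact field comparison avoids regex surprises with the dots in IP addresses.
  if awk -v ip="$ip" -v n="$name" '
       $1 == ip { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit !found }' "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

Usage on each node: `add_host_entry 192.168.152.128 master-s` and `add_host_entry 192.168.152.129 node`; running them again changes nothing.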

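- Make sure every node has a unique MAC address

- Make sure every node has a unique product_uuid (the two outputs below come from two different machines)

cat /sys/class/dmi/id/product_uuid

FD974D56-32F7-FB43-10A9-59784F490377

cat /sys/class/dmi/id/product_uuid

EC034D56-501A-D816-DD2F-2584BB7D6CA2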
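Both checks come down to reading a few sysfs files on every node and comparing the results across machines. A small sketch, assuming the standard sysfs layout; the hypothetical `node_identity` helper takes the sysfs root as an optional argument only so it can be exercised against a fake tree:

```shell
# node_identity [SYSFS_ROOT]: print each interface's MAC address and the product_uuid.
node_identity() {
  local root="${1:-/sys}" dev
  for dev in "$root"/class/net/*; do
    [ -r "$dev/address" ] || continue
    # Skip loopback: its all-zero MAC is identical on every host by design.
    [ "$(basename "$dev")" = lo ] && continue
    printf '%s %s\n' "$(basename "$dev")" "$(cat "$dev/address")"
  done
  # product_uuid is usually readable by root only.
  printf 'product_uuid %s\n' "$(cat "$root/class/dmi/id/product_uuid" 2>/dev/null || echo unreadable)"
}
```

Run `node_identity` as root on every node and make sure no MAC address or UUID appears twice.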

- Make sure the iptables tooling does not use the nftables backend (the newer firewall framework)
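iptables 1.8 and later report their backend in `iptables --version`, e.g. `iptables v1.8.4 (nf_tables)` or `iptables v1.8.4 (legacy)`, while the stock iptables 1.4.21 on CentOS 7 predates the nftables backend and prints no suffix. A sketch that classifies that string (the helper name is hypothetical):

```shell
# iptables_backend VERSION_STRING: print "nf_tables" or "legacy".
iptables_backend() {
  case "$1" in
    *"(nf_tables)"*) echo nf_tables ;;
    # "(legacy)" suffix, or an old 1.4.x build with no suffix at all
    *) echo legacy ;;
  esac
}
```

Usage: `iptables_backend "$(iptables --version)"` should print `legacy`.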

- Disable SELinux
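One common way to do this, and the approach the kubeadm installation docs take, is to switch SELinux to permissive mode both at runtime and in /etc/selinux/config. Permissive rather than fully disabled is a choice made in this sketch; set `SELINUX=disabled` instead if you prefer, which only takes effect after a reboot. The helper name is hypothetical:

```shell
# selinux_permissive [CONFIG]: stop enforcing now and keep it off after reboot.
selinux_permissive() {
  local cfg="${1:-/etc/selinux/config}"
  # Change the running mode only when SELinux is actually enforcing.
  if command -v getenforce >/dev/null 2>&1 && [ "$(getenforce)" = Enforcing ]; then
    setenforce 0
  fi
  # Persist the setting for future boots.
  sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' "$cfg"
}
```

Run `selinux_permissive` as root on every node.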

- Disable the swap partition

sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

swapoff -a
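The sed above only matches lines where `swap` is surrounded by spaces, so an fstab that separates fields with tabs is left untouched and swap comes back after a reboot. A slightly more tolerant sketch (hypothetical helper names), plus a check against /proc/swaps, whose first line is always a header:

```shell
# comment_swap [FSTAB]: comment out active swap entries; safe to run repeatedly.
comment_swap() {
  local fstab="${1:-/etc/fstab}"
  # Match any uncommented line with a whitespace-delimited "swap" field (spaces or tabs).
  sed -E -i '/^[[:space:]]*[^#[:space:]].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# swap_active [PROC_SWAPS]: succeed if any swap area is in use.
swap_active() {
  local swaps="${1:-/proc/swaps}"
  # More than the header line means at least one active swap area.
  [ "$(wc -l < "$swaps")" -gt 1 ]
}
```

Usage: `comment_swap && swapoff -a && ! swap_active && echo "swap is off"`.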

- Adjust kernel parameters

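cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
br_netfilter
EOF

# modules-load.d is only read at boot, so also load br_netfilter now; otherwise the net.bridge.* keys below do not exist yet

modprobe br_netfilter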

cat <<EOF >/etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

vm.swappiness=0

EOF

sysctl --system
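kubeadm's preflight checks later read bridge-nf-call-iptables and ip_forward straight from /proc/sys, as the init log further down shows, so it helps to confirm them right away. A sketch with a hypothetical helper; the /proc/sys root is a parameter only so the check can be tried against a fake tree:

```shell
# check_k8s_sysctls [PROCSYS_ROOT]: report any required kernel setting that is not 1.
check_k8s_sysctls() {
  local root="${1:-/proc/sys}" key val rc=0
  for key in net/bridge/bridge-nf-call-iptables \
             net/bridge/bridge-nf-call-ip6tables \
             net/ipv4/ip_forward; do
    if [ ! -r "$root/$key" ]; then
      # The net/bridge keys only exist while br_netfilter is loaded.
      echo "missing: $key (is br_netfilter loaded?)"
      rc=1
      continue
    fi
    val=$(cat "$root/$key")
    if [ "$val" != "1" ]; then
      echo "wrong: $key=$val (want 1)"
      rc=1
    fi
  done
  return "$rc"
}
```

Usage: `check_k8s_sysctls && echo "kernel parameters OK"`.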

2. Configure yum mirrors hosted in China (Aliyun)

mkdir -p /etc/yum.repos.d/bak

mv /etc/yum.repos.d/*.repo /etc/yum.repos.d/bak/

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

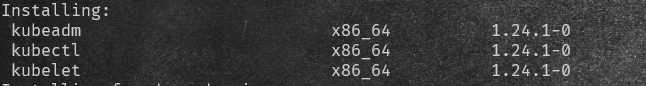

3. Install kubeadm, kubelet and kubectl

yum install -y kubelet kubeadm kubectl

systemctl enable kubelet.service

# Set the kubelet's cgroup driver to systemd (matching Docker's setting below)

cat <<EOF > /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--cgroup-driver=systemd"

EOF

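- Without a version, yum installs the latest packages. kubeadm init below deploys v1.24.1, so if a newer release is available, pin the packages to that version, e.g. `yum install -y kubelet-1.24.1 kubeadm-1.24.1 kubectl-1.24.1`.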
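An unpinned install can leave the kubelet newer than the v1.24.1 control plane, which the Kubernetes version skew policy does not allow. A quick sketch of that check (hypothetical helpers; it assumes major version 1 and the rule documented for v1.24, under which the kubelet may be up to two minor versions older than kube-apiserver but never newer):

```shell
# minor_of VERSION: "v1.24.1" or "1.24.1" -> 24
minor_of() {
  local v="${1#v}"
  v="${v#*.}"        # drop the major part -> "24.1"
  echo "${v%%.*}"    # keep the minor part -> "24"
}

# kubelet_skew_ok APISERVER_VERSION KUBELET_VERSION
# Assumed rule: apiserver_minor - 2 <= kubelet_minor <= apiserver_minor.
kubelet_skew_ok() {
  local api kube
  api=$(minor_of "$1")
  kube=$(minor_of "$2")
  [ "$kube" -le "$api" ] && [ "$kube" -ge $((api - 2)) ]
}
```

Usage: `kubelet_skew_ok v1.24.1 "$(kubelet --version | awk '{print $2}')" || echo "kubelet version does not fit the v1.24.1 control plane"`.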

4. Install Docker Engine

1. Install the Docker service

# Step 1: install required system tools

yum install -y yum-utils device-mapper-persistent-data lvm2

# Step 2: add the Docker CE repository (Aliyun mirror)

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: refresh the cache and install Docker CE

yum makecache fast

yum -y install docker-ce

# Step 4: start Docker and enable it at boot

systemctl enable --now docker

# Switch Docker's cgroup driver to systemd so it matches the kubelet

cat > /etc/docker/daemon.json << EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"registry-mirrors":["https://docker.mirrors.ustc.edu.cn/"]

}

EOF

systemctl daemon-reload

systemctl restart docker
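The kubelet and Docker must use the same cgroup driver. `docker info --format '{{.CgroupDriver}}'` prints the driver Docker is actually using after the restart; a small sketch wrapping that check (hypothetical helper; the optional argument lets you pass the value in directly):

```shell
# docker_cgroup_ok [DRIVER]: succeed if Docker's cgroup driver is systemd.
docker_cgroup_ok() {
  # Ask the running Docker daemon only when no value was passed in.
  local driver="${1:-$(docker info --format '{{.CgroupDriver}}' 2>/dev/null)}"
  if [ "$driver" = "systemd" ]; then
    echo "docker cgroup driver: systemd"
  else
    echo "docker cgroup driver is '${driver:-unknown}', expected systemd" >&2
    return 1
  fi
}
```

Usage: `docker_cgroup_ok` right after `systemctl restart docker`.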

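2. Install ipset and ipvsadm

# Install the IPVS user-space tools

yum install -y ipset ipvsadm

# Create a script that loads the IPVS kernel modules. On CentOS 7, executable *.modules files in /etc/sysconfig/modules/ are run at boot, hence the .modules suffix

cat > /etc/sysconfig/modules/ipvs.modules <<EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_sh
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- nf_conntrack
EOF

# Make the script executable and run it once now

chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules

# Loading the modules only makes IPVS available: kube-proxy keeps its default iptables mode unless its configuration sets mode: ipvs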
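To confirm the modules are actually loaded (the usual quick check is `lsmod | grep -e ip_vs -e nf_conntrack`), here is a sketch of a hypothetical helper that reports each missing name explicitly. It reads /proc/modules, overridable through `PROC_MODULES` for testing; modules compiled into the kernel never appear there:

```shell
# check_modules NAME...: report any kernel module that is not currently loaded.
# A "not loaded" line can also mean the feature is built into the kernel.
check_modules() {
  local modfile="${PROC_MODULES:-/proc/modules}" m rc=0
  for m in "$@"; do
    # The first field of each /proc/modules line is the module name.
    if ! awk -v m="$m" '$1 == m { found = 1 } END { exit !found }' "$modfile"; then
      echo "not loaded: $m"
      rc=1
    fi
  done
  return "$rc"
}
```

Usage: `check_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack && echo "IPVS modules loaded"`.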

3. Install cri-dockerd

# Install cri-dockerd from its GitHub Releases page, following the README: https://github.com/Mirantis/cri-dockerd

rpm -ivh https://github.com/Mirantis/cri-dockerd/releases/download/v0.2.2/cri-dockerd-0.2.2.20220610195206.0737013-0.el7.x86_64.rpm

# Point crictl at cri-dockerd instead of its default endpoints

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///var/run/cri-dockerd.sock

image-endpoint: unix:///var/run/cri-dockerd.sock

timeout: 10

debug: false

EOF

systemctl daemon-reload

systemctl enable cri-docker.service

systemctl enable --now cri-docker.socket
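cri-dockerd chooses the pause (sandbox) image itself rather than taking it from kubeadm's `--image-repository`, and its built-in default refers to an image on k8s.gcr.io, which is often unreachable from mainland China. A commonly used workaround is to pass `--pod-infra-container-image` to cri-dockerd through a systemd drop-in. The sketch below builds that drop-in from the unit's existing ExecStart line. The helper name, the unit path `/usr/lib/systemd/system/cri-docker.service` and the drop-in file name are assumptions (`systemctl cat cri-docker.service` shows the real unit), and `pause:3.7` is the tag listed for the Aliyun mirror in the init log below:

```shell
# make_pause_override UNIT_FILE IMAGE: print a systemd drop-in that re-runs the
# unit's ExecStart with --pod-infra-container-image appended.
# Assumes the ExecStart command sits on a single line in UNIT_FILE.
make_pause_override() {
  local unit="$1" image="$2" exec_line
  exec_line=$(grep -m1 '^ExecStart=' "$unit") || return 1
  # An empty ExecStart= clears the original command before the new one is set.
  printf '[Service]\nExecStart=\n%s --pod-infra-container-image=%s\n' "$exec_line" "$image"
}
```

Usage (as root): `mkdir -p /etc/systemd/system/cri-docker.service.d`, then `make_pause_override /usr/lib/systemd/system/cri-docker.service registry.aliyuncs.com/google_containers/pause:3.7 > /etc/systemd/system/cri-docker.service.d/pause-image.conf`, and finally `systemctl daemon-reload && systemctl restart cri-docker.service`.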

5. Bootstrap the cluster with kubeadm (on the master)

kubeadm init \
  --apiserver-advertise-address=192.168.152.128 \
  --kubernetes-version=v1.24.1 \
  --pod-network-cidr=10.244.0.0/16 \
  --service-cidr=10.1.0.0/16 \
  --image-repository registry.aliyuncs.com/google_containers \
  --cri-socket=unix:///var/run/cri-dockerd.sock # specify the CRI socket

Output of the author's run (with --v=5 added for verbose logging):

$ kubeadm init \
> --apiserver-advertise-address=192.168.152.128 \
> --kubernetes-version=v1.24.1 \
> --pod-network-cidr=10.244.0.0/16 \
> --service-cidr=10.1.0.0/16 \
> --image-repository registry.aliyuncs.com/google_containers --cri-socket=unix:///var/run/cri-dockerd.sock --v=5

I0614 17:02:47.393157 13389 kubelet.go:214] the value of KubeletConfiguration.cgroupDriver is empty; setting it to "systemd"

[init] Using Kubernetes version: v1.24.1

[preflight] Running pre-flight checks

I0614 17:02:47.395715 13389 checks.go:570] validating Kubernetes and kubeadm version

I0614 17:02:47.395728 13389 checks.go:170] validating if the firewall is enabled and active

I0614 17:02:47.400751 13389 checks.go:205] validating availability of port 6443

I0614 17:02:47.400841 13389 checks.go:205] validating availability of port 10259

I0614 17:02:47.400854 13389 checks.go:205] validating availability of port 10257

I0614 17:02:47.400867 13389 checks.go:282] validating the existence of file /etc/kubernetes/manifests/kube-apiserver.yaml

I0614 17:02:47.400874 13389 checks.go:282] validating the existence of file /etc/kubernetes/manifests/kube-controller-manager.yaml

I0614 17:02:47.400879 13389 checks.go:282] validating the existence of file /etc/kubernetes/manifests/kube-scheduler.yaml

I0614 17:02:47.400883 13389 checks.go:282] validating the existence of file /etc/kubernetes/manifests/etcd.yaml

I0614 17:02:47.400888 13389 checks.go:432] validating if the connectivity type is via proxy or direct

I0614 17:02:47.400909 13389 checks.go:471] validating http connectivity to first IP address in the CIDR

I0614 17:02:47.400926 13389 checks.go:471] validating http connectivity to first IP address in the CIDR

I0614 17:02:47.400935 13389 checks.go:106] validating the container runtime

I0614 17:02:47.417636 13389 checks.go:331] validating the contents of file /proc/sys/net/bridge/bridge-nf-call-iptables

I0614 17:02:47.417787 13389 checks.go:331] validating the contents of file /proc/sys/net/ipv4/ip_forward

I0614 17:02:47.417803 13389 checks.go:646] validating whether swap is enabled or not

I0614 17:02:47.417825 13389 checks.go:372] validating the presence of executable crictl

I0614 17:02:47.417842 13389 checks.go:372] validating the presence of executable conntrack

I0614 17:02:47.417868 13389 checks.go:372] validating the presence of executable ip

I0614 17:02:47.417878 13389 checks.go:372] validating the presence of executable iptables

I0614 17:02:47.417886 13389 checks.go:372] validating the presence of executable mount

I0614 17:02:47.417894 13389 checks.go:372] validating the presence of executable nsenter

I0614 17:02:47.417900 13389 checks.go:372] validating the presence of executable ebtables

I0614 17:02:47.417907 13389 checks.go:372] validating the presence of executable ethtool

I0614 17:02:47.417916 13389 checks.go:372] validating the presence of executable socat

I0614 17:02:47.417923 13389 checks.go:372] validating the presence of executable tc

I0614 17:02:47.417928 13389 checks.go:372] validating the presence of executable touch

I0614 17:02:47.417937 13389 checks.go:518] running all checks

I0614 17:02:47.426086 13389 checks.go:403] checking whether the given node name is valid and reachable using net.LookupHost

I0614 17:02:47.426194 13389 checks.go:612] validating kubelet version

I0614 17:02:47.469010 13389 checks.go:132] validating if the "kubelet" service is enabled and active

I0614 17:02:47.474227 13389 checks.go:205] validating availability of port 10250

I0614 17:02:47.474291 13389 checks.go:205] validating availability of port 2379

I0614 17:02:47.474303 13389 checks.go:205] validating availability of port 2380

I0614 17:02:47.474314 13389 checks.go:245] validating the existence and emptiness of directory /var/lib/etcd

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

I0614 17:02:47.474391 13389 checks.go:834] using image pull policy: IfNotPresent

I0614 17:02:47.484923 13389 checks.go:843] image exists: registry.aliyuncs.com/google_containers/kube-apiserver:v1.24.1

I0614 17:02:47.494691 13389 checks.go:843] image exists: registry.aliyuncs.com/google_containers/kube-controller-manager:v1.24.1

I0614 17:02:47.505789 13389 checks.go:843] image exists: registry.aliyuncs.com/google_containers/kube-scheduler:v1.24.1

I0614 17:02:47.516467 13389 checks.go:843] image exists: registry.aliyuncs.com/google_containers/kube-proxy:v1.24.1

I0614 17:02:47.527902 13389 checks.go:843] image exists: registry.aliyuncs.com/google_containers/pause:3.7

I0614 17:02:47.538349 13389 checks.go:843] image exists: registry.aliyuncs.com/google_containers/etcd:3.5.3-0

I0614 17:02:47.547152 13389 checks.go:843] image exists: registry.aliyuncs.com/google_containers/coredns:v1.8.6

[certs] Using certificateDir folder "/etc/kubernetes/pki"

I0614 17:02:47.547222 13389 certs.go:112] creating a new certificate authority for ca

[certs] Generating "ca" certificate and key

I0614 17:02:47.635530 13389 certs.go:522] validating certificate period for ca certificate

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local master-s] and IPs [10.1.0.1 192.168.152.128]

[certs] Generating "apiserver-kubelet-client" certificate and key

I0614 17:02:47.896502 13389 certs.go:112] creating a new certificate authority for front-proxy-ca

[certs] Generating "front-proxy-ca" certificate and key

I0614 17:02:47.940873 13389 certs.go:522] validating certificate period for front-proxy-ca certificate

[certs] Generating "front-proxy-client" certificate and key

I0614 17:02:47.997070 13389 certs.go:112] creating a new certificate authority for etcd-ca

[certs] Generating "etcd/ca" certificate and key

I0614 17:02:48.144172 13389 certs.go:522] validating certificate period for etcd/ca certificate

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost master-s] and IPs [192.168.152.128 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost master-s] and IPs [192.168.152.128 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

I0614 17:02:48.693148 13389 certs.go:78] creating new public/private key files for signing service account users

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

I0614 17:02:48.872862 13389 kubeconfig.go:103] creating kubeconfig file for admin.conf

[kubeconfig] Writing "admin.conf" kubeconfig file

I0614 17:02:48.989414 13389 kubeconfig.go:103] creating kubeconfig file for kubelet.conf

[kubeconfig] Writing "kubelet.conf" kubeconfig file

I0614 17:02:49.063354 13389 kubeconfig.go:103] creating kubeconfig file for controller-manager.conf

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

I0614 17:02:49.174448 13389 kubeconfig.go:103] creating kubeconfig file for scheduler.conf

[kubeconfig] Writing "scheduler.conf" kubeconfig file

I0614 17:02:49.289357 13389 kubelet.go:65] Stopping the kubelet

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

I0614 17:02:49.392466 13389 manifests.go:99] [control-plane] getting StaticPodSpecs

I0614 17:02:49.392701 13389 certs.go:522] validating certificate period for CA certificate

I0614 17:02:49.392751 13389 manifests.go:125] [control-plane] adding volume "ca-certs" for component "kube-apiserver"

I0614 17:02:49.392756 13389 manifests.go:125] [control-plane] adding volume "etc-pki" for component "kube-apiserver"

I0614 17:02:49.392759 13389 manifests.go:125] [control-plane] adding volume "k8s-certs" for component "kube-apiserver"

I0614 17:02:49.394250 13389 manifests.go:154] [control-plane] wrote static Pod manifest for component "kube-apiserver" to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

I0614 17:02:49.394288 13389 manifests.go:99] [control-plane] getting StaticPodSpecs

I0614 17:02:49.394400 13389 manifests.go:125] [control-plane] adding volume "ca-certs" for component "kube-controller-manager"

I0614 17:02:49.394405 13389 manifests.go:125] [control-plane] adding volume "etc-pki" for component "kube-controller-manager"

I0614 17:02:49.394408 13389 manifests.go:125] [control-plane] adding volume "flexvolume-dir" for component "kube-controller-manager"

I0614 17:02:49.394411 13389 manifests.go:125] [control-plane] adding volume "k8s-certs" for component "kube-controller-manager"

I0614 17:02:49.394415 13389 manifests.go:125] [control-plane] adding volume "kubeconfig" for component "kube-controller-manager"

I0614 17:02:49.394822 13389 manifests.go:154] [control-plane] wrote static Pod manifest for component "kube-controller-manager" to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[control-plane] Creating static Pod manifest for "kube-scheduler"

I0614 17:02:49.394830 13389 manifests.go:99] [control-plane] getting StaticPodSpecs

I0614 17:02:49.394928 13389 manifests.go:125] [control-plane] adding volume "kubeconfig" for component "kube-scheduler"

I0614 17:02:49.395163 13389 manifests.go:154] [control-plane] wrote static Pod manifest for component "kube-scheduler" to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

I0614 17:02:49.395552 13389 local.go:65] [etcd] wrote Static Pod manifest for a local etcd member to "/etc/kubernetes/manifests/etcd.yaml"

I0614 17:02:49.395560 13389 waitcontrolplane.go:83] [wait-control-plane] Waiting for the API server to be healthy

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 5.502014 seconds

I0614 17:02:54.900753 13389 uploadconfig.go:110] [upload-config] Uploading the kubeadm ClusterConfiguration to a ConfigMap

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

I0614 17:02:54.907815 13389 uploadconfig.go:124] [upload-config] Uploading the kubelet component config to a ConfigMap

[kubelet] Creating a ConfigMap "kubelet-config" in namespace kube-system with the configuration for the kubelets in the cluster

I0614 17:02:54.913670 13389 uploadconfig.go:129] [upload-config] Preserving the CRISocket information for the control-plane node

I0614 17:02:54.913702 13389 patchnode.go:31] [patchnode] Uploading the CRI Socket information "unix:///var/run/cri-dockerd.sock" to the Node API object "master-s" as an annotation

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node master-s as control-plane by adding the labels: [node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node master-s as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule node-role.kubernetes.io/control-plane:NoSchedule]

[bootstrap-token] Using token: zaz04u.j7mou2n32st3zqxx

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

I0614 17:02:55.947678 13389 clusterinfo.go:47] [bootstrap-token] loading admin kubeconfig

I0614 17:02:55.948005 13389 clusterinfo.go:58] [bootstrap-token] copying the cluster from admin.conf to the bootstrap kubeconfig

I0614 17:02:55.948136 13389 clusterinfo.go:70] [bootstrap-token] creating/updating ConfigMap in kube-public namespace

I0614 17:02:55.951496 13389 clusterinfo.go:84] creating the RBAC rules for exposing the cluster-info ConfigMap in the kube-public namespace

I0614 17:02:55.961508 13389 kubeletfinalize.go:90] [kubelet-finalize] Assuming that kubelet client certificate rotation is enabled: found "/var/lib/kubelet/pki/kubelet-client-current.pem"

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

I0614 17:02:55.962290 13389 kubeletfinalize.go:134] [kubelet-finalize] Restarting the kubelet to enable client certificate rotation

[addons] Applied essential addon: CoreDNS

I0614 17:02:56.337045 13389 request.go:533] Waited for 187.811397ms due to client-side throttling, not priority and fairness, request: POST:https://192.168.152.128:6443/apis/rbac.authorization.k8s.io/v1/namespaces/kube-system/rolebindings?timeout=10s

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.152.128:6443 --token zaz04u.j7mou2n32st3zqxx \
        --discovery-token-ca-cert-hash sha256:b70389be6bca211530ec89d39939d77289178021eb91d503cada732531ba02d4
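The same settings can also be kept in a kubeadm configuration file instead of flags, which is easier to review and reuse. A sketch assuming the `kubeadm.k8s.io/v1beta3` config API used by kubeadm v1.24; the file name is hypothetical, and the final KubeProxyConfiguration document is an optional addition that switches kube-proxy to the IPVS mode the modules from step 4 are loaded for (drop it to keep the default iptables mode):

```shell
# Hypothetical output path; override with KUBEADM_CFG if you like.
cfg="${KUBEADM_CFG:-./kubeadm-config.yaml}"
cat > "$cfg" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.152.128
nodeRegistration:
  criSocket: unix:///var/run/cri-dockerd.sock
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.24.1
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
  serviceSubnet: 10.1.0.0/16
---
# Optional: use IPVS instead of the default iptables proxy mode.
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
EOF
echo "wrote $cfg; on the master run: kubeadm init --config $cfg"
```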

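6. Create the kubeconfig as the output instructs

- As a regular user with sudo (as root you can instead run export KUBECONFIG=/etc/kubernetes/admin.conf):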

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

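7. Join worker nodes

- On each node, perform steps 1, 2, 3 and 4 exactly as on the master

- Join command (as root). --cri-socket is required because the node has both the containerd socket installed with docker-ce and the cri-dockerd socket, and kubeadm will not pick one on its own

kubeadm join 192.168.152.128:6443 --token zaz04u.j7mou2n32st3zqxx \
  --discovery-token-ca-cert-hash sha256:b70389be6bca211530ec89d39939d77289178021eb91d503cada732531ba02d4 \
  --cri-socket=unix:///var/run/cri-dockerd.sock # specify the CRI socket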
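The bootstrap token in this join command expires after 24 hours by default. On the master, `kubeadm token create --print-join-command` prints a fresh join command (remember to append `--cri-socket` to it), and the `--discovery-token-ca-cert-hash` value can be recomputed from the cluster CA certificate. A sketch of the latter, following the openssl pipeline from the kubeadm documentation and assuming an RSA CA key (kubeadm's default); the helper name is hypothetical:

```shell
# ca_cert_hash [CA_CERT]: print the sha256:<hex> value for --discovery-token-ca-cert-hash.
ca_cert_hash() {
  local ca="${1:-/etc/kubernetes/pki/ca.crt}" hex
  # Extract the CA public key, DER-encode it, hash it, keep only the hex digest.
  hex=$(openssl x509 -pubkey -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //')
  echo "sha256:$hex"
}
```

Run `ca_cert_hash` on the master; its output should match the hash in the join command above.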

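After that, install a CNI plugin and any other add-ons. (The pod CIDR 10.244.0.0/16 used above is flannel's default network.)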

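- Original author: 老鱼干🦈

- Original link: //www.tinyfish.top:80/post/kubernets/kubeadm%E5%AE%89%E8%A3%85Kubernetes-v1.24.1-Docker-Enginecri-docker/

- Copyright notice: This work is licensed under the Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License. Non-commercial reposts must credit the source (author and original link); commercial reposts require the author's permission.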